Amazon Web Services (AWS) is a highly secure and durable cloud platform, offering industry-leading scalability, data availability, and performance. It has several built-in features and capabilities that protect data against corruption, loss, and accidental or malicious overwrites, modifications, or deletions.

However, security professionals’ concerns remain reasonable, given that most cloud security failures are due to misconfigurations. As customers and users, we need to follow best practices to ensure security in the cloud.

We must understand how these features and controls work, learn how to properly implement them at every stage, and build a comprehensive security strategy. In addition, all teams must closely align with best practices and shape the processes that support cloud-native security.

This is why Digital Cloud Advisor was born.

By implementing the best practices based on Well-Architected Security Policies and Principles, we can help you ensure the confidentiality, integrity, and availability of data at all times. By configuring fine-tuned access controls and encrypting data both at rest and in transit, we can assist you in meeting specific business, organisational, and compliance requirements for mission-critical and primary storage services.

From optimising cost to organising data, here’s how we can help you implement auditability, visibility, agility, and automation in your cloud infrastructure to uphold the company’s sustainability objectives and applicable laws and regulations.

Ensuring fine-grained Access Control

Proper access control is fundamental in reducing security risk and the impact that could result from errors or malicious intent. Fine-grained access control in Amazon S3 allows for more precise control over who can access specific objects within a bucket.

By implementing the “least privilege” access model, we can ensure that each user has only the minimum permissions needed to perform a given task. For example, if a user should be able to read objects from a bucket but not delete them, then to maintain least-privilege access you need to ensure that the delete permission is never granted to that user.
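The read-but-not-delete example above can be sketched as an IAM policy document. This is a minimal illustration rather than a definitive policy: the function name `read_only_policy` and the bucket name `example-reports-bucket` are hypothetical, and a real policy would likely need further actions (such as `s3:ListBucket`) depending on the workload.

```python
import json


def read_only_policy(bucket_name: str) -> dict:
    """Build an IAM policy document that allows reading objects and nothing else."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowObjectReadOnly",
                "Effect": "Allow",
                # Only the read action is listed. s3:PutObject and
                # s3:DeleteObject are left out, so they stay implicitly denied.
                "Action": ["s3:GetObject"],
                "Resource": f"arn:aws:s3:::{bucket_name}/*",
            }
        ],
    }


if __name__ == "__main__":
    # "example-reports-bucket" is a hypothetical bucket name.
    print(json.dumps(read_only_policy("example-reports-bucket"), indent=2))
```

Because IAM denies anything that is not explicitly allowed, leaving the delete action out of the policy is what keeps the user from deleting objects.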

There are several ways to implement fine-grained access control in Amazon S3, such as:

- Bucket policies: Bucket policies are JSON documents that specify which AWS accounts or IAM users can access a specific bucket or object. You can tailor bucket access so that only approved users can access resources and perform actions on them. With bucket policies, you can use conditions to limit access to your bucket, for example by allowing only requests that originate from a given range of IP addresses or by requiring that all requests to the bucket use multi-factor authentication (MFA).

- Identity & Access Management (IAM) Policies: IAM policies allow you to specify granular access permissions for Amazon S3 resources. For example, you can create a policy that enables specific IAM users or groups to read objects in a particular bucket but not write or delete them. You can also use IAM policies to specify conditions under which a user can access resources, such as only allowing access to specific prefixes or keys within a bucket. Additionally, you can use IAM policies to restrict access to particular IP addresses or VPCs. With these capabilities, you can use IAM policies to provide fine-grained access control for S3 resources.

- IAM roles for applications and AWS services that require Amazon S3 access: Applications on Amazon EC2 or other AWS services must include valid AWS credentials in their AWS API requests to access Amazon S3 resources. We should not store AWS credentials directly in the application or Amazon EC2 instance. These are long-term credentials that are not automatically rotated and could have a significant business impact if they are compromised.

Instead, we should use an IAM role to manage temporary credentials for applications or services that need to access Amazon S3. When we use a role, we don’t have to distribute long-term credentials (such as a username and password or access keys) to an Amazon EC2 instance or AWS service such as AWS Lambda. The role supplies temporary permissions that applications can use when calling other AWS resources.

- Amazon S3 Access Control Lists (ACLs): An ACL is a set of grants that define which AWS accounts or predefined groups have access to a bucket or object.

- VPC endpoints: A VPC endpoint for Amazon S3 is a logical entity within a virtual private cloud (VPC) that allows connectivity only to Amazon S3. A VPC endpoint helps keep traffic between your VPC and Amazon S3 from traversing the open internet.

- Presigned URLs to Grant Temporary Access: Presigned URLs can grant temporary access to a specific object or allow a user to upload an object to an S3 bucket without having to grant permanent access to the bucket. Presigned URLs are helpful if we want our user/customer to be able to upload a specific object to our bucket but don’t require them to have AWS security credentials or permissions.
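As an illustration of the bucket policy conditions mentioned in the list above, the following sketch builds a policy that denies requests from outside an allowed IP range and requests made without MFA. The function name, the bucket name, and the CIDR range `203.0.113.0/24` (a documentation-only range) are assumptions for the example. A blanket IP-based deny like this can also block AWS services and VPC endpoint traffic, so treat it as a starting point to adapt.

```python
import json


def restricted_bucket_policy(bucket_name: str, allowed_cidr: str) -> dict:
    """Build a bucket policy that denies requests outside a CIDR range or without MFA."""
    bucket_arn = f"arn:aws:s3:::{bucket_name}"
    # Cover both the bucket itself and every object inside it.
    resources = [bucket_arn, f"{bucket_arn}/*"]
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyRequestsOutsideAllowedRange",
                "Effect": "Deny",
                "Principal": "*",
                "Action": "s3:*",
                "Resource": resources,
                # Matches any request whose source IP is NOT in the range.
                "Condition": {"NotIpAddress": {"aws:SourceIp": allowed_cidr}},
            },
            {
                "Sid": "DenyRequestsWithoutMFA",
                "Effect": "Deny",
                "Principal": "*",
                "Action": "s3:*",
                "Resource": resources,
                # The MFA age key is absent (null) when MFA was not used.
                "Condition": {"Null": {"aws:MultiFactorAuthAge": "true"}},
            },
        ],
    }


if __name__ == "__main__":
    # Hypothetical bucket name and documentation-only CIDR range.
    policy = restricted_bucket_policy("example-reports-bucket", "203.0.113.0/24")
    print(json.dumps(policy, indent=2))
```

The resulting JSON could be attached to a bucket through the console, the AWS CLI, or an SDK. Because both statements are explicit denies, they take precedence over any allow granted elsewhere.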

Using a combination of these methods, Digital Cloud Advisors can help you ensure that only the right people have access to specific objects within a bucket and that any changes to access permissions are tracked and auditable.

Protecting Data at Scale

Once data is uploaded to an S3 bucket, further action must be taken to protect it against corruption, loss, and malicious or accidental removal or modification. Our security experts can protect your S3 data by implementing various security measures. These include but are not limited to:

Encrypting the data both in transit and at rest

Encryption can help protect your data even when your account access has been compromised. Depending on your security and compliance requirements, we can help you decide whether to use server-side encryption (SSE), client-side encryption (CSE), or both of these techniques to encrypt your data on AWS.

To protect data in transit, we can encrypt it using Secure Sockets Layer/Transport Layer Security (SSL/TLS) or client-side encryption, which helps prevent unauthorised users from eavesdropping on or manipulating network traffic through man-in-the-middle or similar attacks. To allow only encrypted connections (HTTPS over TLS), we can enforce encryption in transit on all traffic to a bucket through bucket policies.
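A common way to enforce HTTPS-only access through a bucket policy is to deny any request where the `aws:SecureTransport` condition key is false. The sketch below builds such a policy. The function name and bucket name are hypothetical.

```python
import json


def https_only_policy(bucket_name: str) -> dict:
    """Build a bucket policy that rejects any request not sent over HTTPS."""
    bucket_arn = f"arn:aws:s3:::{bucket_name}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyInsecureTransport",
                "Effect": "Deny",
                "Principal": "*",
                "Action": "s3:*",
                "Resource": [bucket_arn, f"{bucket_arn}/*"],
                # aws:SecureTransport is "false" for plain HTTP requests.
                "Condition": {"Bool": {"aws:SecureTransport": "false"}},
            }
        ],
    }


if __name__ == "__main__":
    # "example-reports-bucket" is a hypothetical bucket name.
    print(json.dumps(https_only_policy("example-reports-bucket"), indent=2))
```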

Enabling Amazon S3 Versioning

Amazon S3 versioning allows you to preserve, retrieve, and restore every version of every object in your S3 bucket. This enables you to retrieve previous versions of an object that may have been overwritten or deleted. With versioning, you can quickly recover from unintended user actions and application failures.

Using Amazon S3 Replication

Amazon S3 Replication enables automatic, asynchronous copying of objects across Amazon S3 buckets, either in different AWS Regions or within the Region they currently reside in. All data assets are encrypted in transit with SSL/TLS to help achieve the highest levels of data security. With Amazon S3 Replication, we can protect our data and meet compliance and risk models that require replication of data assets to a second, geographically distant location.

Using Multi-Factor Authentication (MFA) Delete

MFA delete is a feature in Amazon S3 that requires users to provide a valid multi-factor authentication (MFA) code to permanently delete an object version or change the versioning state of a designated S3 bucket. By enabling MFA delete on a versioned bucket, you can add an extra layer of protection for your data stored in the S3 bucket and ensure that only authorised users with the appropriate level of access can delete objects.
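Versioning and MFA delete are both set through the same bucket versioning configuration. The sketch below only builds the request parameters, in the shape that boto3's `put_bucket_versioning` call accepts. It does not contact AWS. The helper name, bucket name, MFA device serial, and code are all hypothetical, and in practice MFA delete can only be enabled with the bucket owner's root credentials.

```python
from typing import Optional


def versioning_request(
    bucket_name: str,
    mfa_serial: Optional[str] = None,
    mfa_code: Optional[str] = None,
) -> dict:
    """Build parameters for enabling versioning, optionally with MFA delete."""
    config = {"Status": "Enabled"}
    request = {"Bucket": bucket_name, "VersioningConfiguration": config}
    if mfa_serial and mfa_code:
        # MFA delete needs the device serial and the current code,
        # joined by a single space, in the MFA parameter.
        config["MFADelete"] = "Enabled"
        request["MFA"] = f"{mfa_serial} {mfa_code}"
    return request


if __name__ == "__main__":
    # Hypothetical values; with boto3 installed and credentials configured
    # this could be sent as:
    #   boto3.client("s3").put_bucket_versioning(**params)
    params = versioning_request(
        "example-reports-bucket",
        mfa_serial="arn:aws:iam::111122223333:mfa/root-account-mfa-device",
        mfa_code="123456",
    )
    print(params)
```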

Enabling S3 Object-Level Logging

Amazon S3 object-level logging is a feature that allows you to track and audit access to your S3 objects. It records detailed information about object-level operations such as GET, PUT, DELETE, and LIST in your S3 bucket.
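Assuming the object-level logging described here is delivered through AWS CloudTrail data events, the sketch below builds an event selector that records read and write operations on every object in one bucket. The helper name and bucket name are hypothetical. The dictionary follows the shape expected by CloudTrail's `put_event_selectors` API, and nothing is sent to AWS.

```python
import json


def s3_data_event_selector(bucket_name: str) -> dict:
    """Build a CloudTrail event selector for object-level S3 events on one bucket."""
    return {
        # "All" records both read (e.g. GetObject) and write
        # (e.g. PutObject, DeleteObject) operations.
        "ReadWriteType": "All",
        "IncludeManagementEvents": True,
        "DataResources": [
            {
                "Type": "AWS::S3::Object",
                # The trailing slash selects every object in the bucket.
                "Values": [f"arn:aws:s3:::{bucket_name}/"],
            }
        ],
    }


if __name__ == "__main__":
    # "example-reports-bucket" is a hypothetical bucket name.
    print(json.dumps(s3_data_event_selector("example-reports-bucket"), indent=2))
```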

Logging and Monitoring

Security breaches don’t always happen all at once, and monitoring can help stop a breach before it occurs or identify gaps to prevent further issues. Depending on your business requirements, several tools and services can be deployed to continuously monitor and audit your services and usage, enabling more robust governance, compliance, and risk assessment. For example:

- Gain visibility of sensitive data and security posture with Amazon Macie

- Monitor and detect unusual activity with AWS CloudTrail

- Monitor resource configuration with AWS Config

- Monitor and manage S3 using Amazon CloudWatch

- Check bucket permissions and logging configuration with AWS Trusted Advisor

- Record the requests made to a bucket with server access logs

- Detect threats using Amazon GuardDuty

- Monitor replication and encryption status using Amazon S3 Inventory

By designing and implementing a logging and monitoring workflow, the Digital Cloud Advisors team can help you reduce the time to detect threats in your cloud environment.

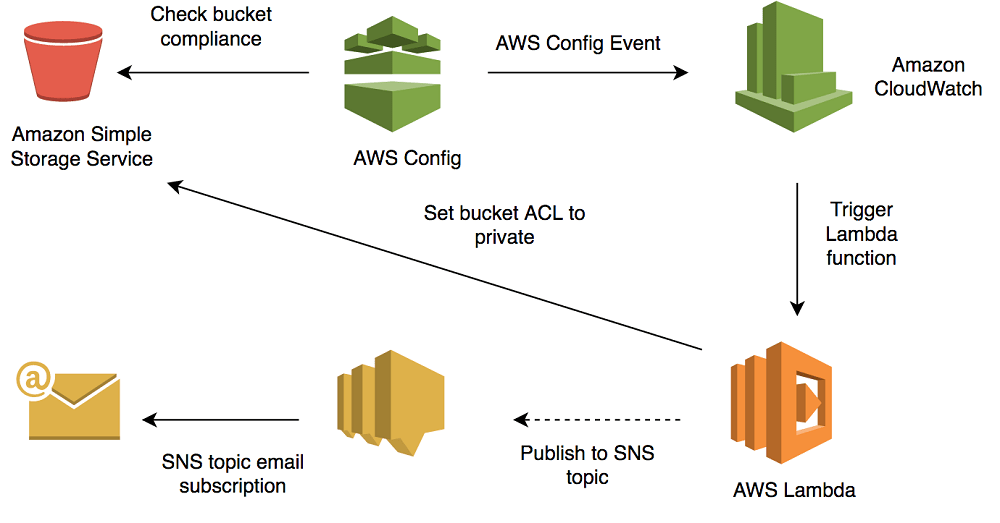

Enabling Automation

Once access control, data protection, logging, and monitoring are set up properly, it is important to automate your security tasks. Automation enables proactive detection and correction of security issues before they get into production. Done properly, automation can free up security professionals from mundane tasks so they can focus on higher-value (and often more interesting) challenges.

We can support you in developing and implementing event-driven architectures that automatically notify administrators of potential security breaches, such as unauthorised access attempts or changes to S3 bucket policies. These can also alert you to possible security vulnerabilities or threats, for example publicly accessible S3 buckets or buckets configured with overly permissive access control. Our highly skilled IT experts can help you automate security best practices and compliance checks to help ensure that data stored in S3 conforms to industry and regulatory standards.
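As a small example of the kind of check such automation might run, the sketch below scans a bucket policy document for statements that allow access to any principal without a condition. It is a simplified heuristic, not a replacement for AWS's own public access evaluation. The function name and sample policy are hypothetical.

```python
def public_statements(policy: dict) -> list:
    """Return identifiers of Allow statements open to any principal with no condition."""
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):
        # A policy may hold a single statement instead of a list.
        statements = [statements]
    flagged = []
    for index, stmt in enumerate(statements):
        principal = stmt.get("Principal")
        # Principal is either "*" or a mapping such as {"AWS": "*"}.
        aws = principal.get("AWS") if isinstance(principal, dict) else principal
        values = aws if isinstance(aws, list) else [aws]
        is_wildcard = "*" in values
        if stmt.get("Effect") == "Allow" and is_wildcard and not stmt.get("Condition"):
            flagged.append(stmt.get("Sid", f"statement-{index}"))
    return flagged


if __name__ == "__main__":
    # Hypothetical policy with one public and one account-scoped statement.
    sample = {
        "Version": "2012-10-17",
        "Statement": [
            {"Sid": "PublicRead", "Effect": "Allow", "Principal": "*",
             "Action": "s3:GetObject", "Resource": "arn:aws:s3:::example-bucket/*"},
            {"Sid": "AccountRead", "Effect": "Allow",
             "Principal": {"AWS": "arn:aws:iam::111122223333:root"},
             "Action": "s3:GetObject", "Resource": "arn:aws:s3:::example-bucket/*"},
        ],
    }
    print(public_statements(sample))
```

In an event-driven setup, a function like this could run whenever a bucket policy changes and forward any flagged statements to a notification channel.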

Backup and disaster recovery

Regularly backing up your data to multiple locations and having a disaster recovery plan can help protect against data loss. There are many ways to set up backup and disaster recovery for data stored in Amazon S3. For instance, Amazon S3 Lifecycle policies can automatically move data to the S3 Glacier or Glacier Deep Archive storage class for long-term and archival retention. Additionally, you can use Amazon S3 Cross-Region Replication to replicate data across different S3 buckets in various regions. This can help protect against data loss due to regional outages or disasters.
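The lifecycle transitions described above can be sketched as a lifecycle configuration. The example below only builds the configuration dictionary, in the shape accepted by boto3's `put_bucket_lifecycle_configuration`. The rule ID, the prefix, and the 90 and 365 day thresholds are illustrative assumptions, not recommendations.

```python
import json


def archive_lifecycle(prefix: str, glacier_after_days: int, deep_archive_after_days: int) -> dict:
    """Build a lifecycle configuration that archives objects in two steps."""
    if deep_archive_after_days <= glacier_after_days:
        raise ValueError("Deep Archive transition must come after the Glacier transition")
    return {
        "Rules": [
            {
                "ID": "archive-old-objects",  # hypothetical rule name
                "Status": "Enabled",
                # Apply the rule only to keys that start with this prefix.
                "Filter": {"Prefix": prefix},
                "Transitions": [
                    {"Days": glacier_after_days, "StorageClass": "GLACIER"},
                    {"Days": deep_archive_after_days, "StorageClass": "DEEP_ARCHIVE"},
                ],
            }
        ]
    }


if __name__ == "__main__":
    # Hypothetical prefix and thresholds.
    print(json.dumps(archive_lifecycle("backups/", 90, 365), indent=2))
```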

Digital Cloud Advisor can help you devise the best backup and disaster recovery plan for your infrastructure, optimising your costs while maintaining security and compliance requirements.

Train your employees

As mentioned earlier, training employees on security best practices is critical to protecting sensitive data and maintaining a company’s overall security. Training your employees on security and best practices reduces the risk of data breaches, which can otherwise result in significant financial losses and reputational damage.

Digital Cloud Advisor can help you regularly train your employees on security best practices and ensure they know the risks and how to mitigate them.